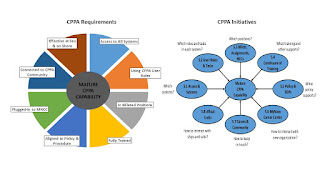

In 2012, the Navy reorganized the administration of pay and personnel under a consolidated organizational hierarchy. One goal of the reorganization was to improve the capabilities and standardization of Command Pay and Personnel Administrators (CPPAs). Work began immediately on improving systems, training, policy, and other support for CPPAs. The work was somewhat unbalanced, however, because some development streams could proceed much more rapidly than others. The resulting lack of synchronization among the streams created significant friction on more than one occasion. When the pace of improvements accelerated in 2017-2018 to coincide with other developments in the domain, these synchronization difficulties became unbearable. Through a series of weekly meetings with a cross-section of stakeholders, we carefully documented requirements for a mature CPPA capability and th